Highlights

- Most users in the United States misunderstand how social media algorithms work, leading to confusion, mistrust, and digital fatigue.

- Algorithms prioritize engagement metrics like watch time, likes, and shares, not fairness or chronological order.

- Invisible user actions such as pauses, clicks, and scrolls influence what content appears next.

- Emotional content is often ranked higher, fueling misinformation, polarization, and echo chambers.

- Platforms rarely explain how feeds are curated, creating a gap between user perception and platform design.

- Manual feed controls and behavioral changes can partially shift algorithmic influence.

- Transparency, digital education, and policy change are needed to improve public understanding and trust.

- The future of algorithm literacy involves user empowerment through education, regulation, and ethical platform design.

Introduction

Platform algorithm confusion in the United States reflects a growing gap between how users believe social media platforms function and how these systems actually work. From personalized feeds on TikTok to trending content on Facebook and YouTube, algorithms have a powerful impact on what Americans see, engage with, and believe. Many users mistakenly think their feeds are neutral or chronological, when in reality, complex ranking systems determine visibility. As someone who has closely followed and researched this landscape, I’ve witnessed the overwhelming misunderstanding, mistrust, and digital fatigue users experience when they feel manipulated or kept in the dark. This article dives deep into the misalignment between user expectations and platform design, exposing the mechanics behind social media algorithms and their real-world effects on perception, behavior, and decision-making.

What Causes Confusion About Platform Algorithms Among American Users?

A primary cause of platform algorithm confusion is the lack of transparency in how content is selected and ranked on user feeds. Most users assume platforms show them the most relevant or popular posts, but the truth is that algorithms often prioritize engagement, watch time, and advertiser interests. This disconnect makes users feel deceived or manipulated when their feeds show content that feels out of sync with their values or interests.

In my experience talking with users across forums, classrooms, and workshops, most people cannot explain why they see certain content repeatedly. Many assume it reflects their friends’ activity, when in fact behavioral signals like pause time, likes, and dwell time are being tracked silently. The absence of a clear explanation from platforms feeds myths, mistrust, and a persistent sense of manipulation.

Algorithm updates happen frequently without notice, further complicating user understanding. When feeds suddenly change, users often attribute it to censorship, shadow banning, or bias, when in reality a change in data modeling or prioritization occurred behind the scenes. The disconnect between the control users expect and the experience they get leaves many Americans feeling powerless in digital spaces.

Feed Ranking Prioritization

Feed ranking prioritization uses predictive models to analyze user behavior, then ranks content based on likelihood of interaction. Instead of showing posts in chronological order, systems predict which videos or posts will trigger clicks, likes, shares, or longer views. As a result, high emotion, sensational, or polarizing content often surfaces more frequently.
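To make the difference concrete, here is a minimal sketch that contrasts a chronological feed with an engagement-ranked one. The `Post` class, the `p_interact` field, and the sample values are hypothetical stand-ins for whatever a real platform's model outputs; no platform publishes its actual ranking code.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    posted_at: int      # timestamp; a larger value means a newer post
    p_interact: float   # hypothetical model output: predicted chance of a click, like, or share


def chronological_feed(posts):
    """Newest first, ignoring predicted engagement."""
    return sorted(posts, key=lambda p: p.posted_at, reverse=True)


def ranked_feed(posts):
    """Highest predicted interaction first, ignoring recency."""
    return sorted(posts, key=lambda p: p.p_interact, reverse=True)


posts = [
    Post("friend_update", posted_at=300, p_interact=0.05),
    Post("local_news", posted_at=200, p_interact=0.12),
    Post("outrage_clip", posted_at=100, p_interact=0.40),
]

print([p.post_id for p in chronological_feed(posts)])
print([p.post_id for p in ranked_feed(posts)])
```

With these made-up numbers, the oldest post jumps to the top of the ranked feed simply because the model expects it to draw the most interaction.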

Lack of Platform Education

Social platforms rarely offer detailed education about how content recommendation works. Vague descriptions like “we personalize your feed” or “you control your experience” give users a false sense of control. Without step-by-step explanations, people struggle to connect their behavior with content visibility.

How Do Recommendation Systems Actually Work Behind the Scenes?

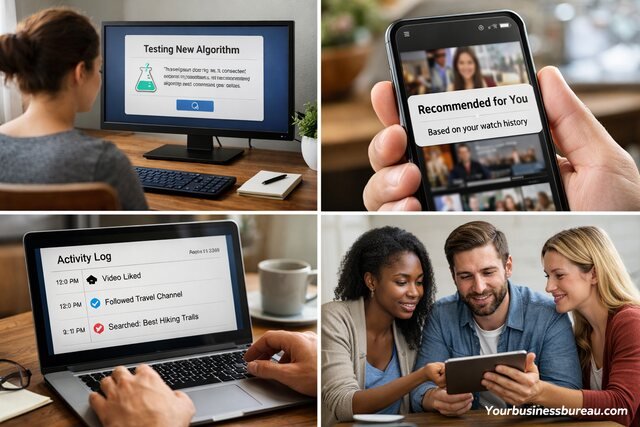

Recommendation systems operate by collecting behavioral data and running it through models trained to predict future actions. Every pause, click, swipe, and share becomes a signal that informs what content will appear next. These systems are not human-curated but are algorithmically trained on massive datasets to optimize engagement metrics.

The technical process involves machine learning models analyzing user clusters, content attributes, and engagement patterns. For example, if a user watches three cooking videos in a row, the system flags cooking as a topic of interest and begins ranking similar content higher in that user’s feed. Over time, the model refines its predictions to increase time spent on the platform.
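The cooking example can be sketched in a few lines. This is a simplified illustration, assuming the only signal is the topic of each watched video; the function names and sample data are hypothetical, and real systems combine many more signals.

```python
from collections import Counter


def topic_interest(watch_history):
    """Return each topic's share of the user's watch history."""
    counts = Counter(watch_history)
    total = sum(counts.values())
    return {topic: n / total for topic, n in counts.items()}


def rank_candidates(candidates, interest):
    """Order (video_id, topic) pairs by the user's interest in each topic."""
    return sorted(candidates, key=lambda c: interest.get(c[1], 0.0), reverse=True)


# One news video followed by three cooking videos in a row.
history = ["news", "cooking", "cooking", "cooking"]
interest = topic_interest(history)

candidates = [("v1", "sports"), ("v2", "cooking"), ("v3", "news")]
ranked = rank_candidates(candidates, interest)
print(ranked)
```

After three cooking videos, cooking makes up most of the history, so the cooking candidate moves ahead of everything else.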

As I’ve personally tested multiple user accounts with different behavior patterns, I’ve seen firsthand how quickly a feed can shift based on subtle inputs. Even searching a single topic temporarily changes the nature of suggested content. The illusion of randomness disappears once you realize every micro-interaction counts.

Predictive Modeling and User Clustering

Platforms use predictive modeling to group users into behavioral clusters. These clusters receive similar content based on shared habits, demographics, or interests. The recommendation engine assumes users within the same cluster will respond similarly, reinforcing echo chambers and narrowing content diversity.
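As a rough illustration of clustering, the sketch below assigns each user to the nearest of three hand-picked cluster centers. The centers, user names, and vectors are all hypothetical, and this shows only the assignment step; production systems learn their clusters from data rather than hard-coding them.

```python
import math

# Hypothetical cluster centers over (cooking, politics, sports) engagement shares.
CENTROIDS = {
    "food_fans": (0.8, 0.1, 0.1),
    "politics_followers": (0.1, 0.8, 0.1),
    "sports_fans": (0.1, 0.1, 0.8),
}


def assign_cluster(user_vector):
    """Return the name of the centroid closest to the user (Euclidean distance)."""
    return min(CENTROIDS, key=lambda name: math.dist(CENTROIDS[name], user_vector))


users = {
    "ana": (0.7, 0.2, 0.1),
    "ben": (0.2, 0.6, 0.2),
    "cy": (0.6, 0.3, 0.1),
}

clusters = {name: assign_cluster(vec) for name, vec in users.items()}
print(clusters)
```

Here "ana" and "cy" land in the same cluster, so a system built this way would tend to show them similar content even though their habits are not identical.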

Engagement Optimization Algorithms

These algorithms focus on maximizing metrics like click-through rate (CTR), view duration, and interaction volume. Content that scores well on these metrics is prioritized, even if it is misleading, emotionally triggering, or repetitive. The algorithm doesn’t understand meaning; it only understands performance.
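The sketch below shows one way such a score could be computed. The weights, the caps, and the sample numbers are assumptions chosen for illustration, not values any platform has disclosed. Note that the function has no input for accuracy at all.

```python
def engagement_score(ctr, avg_view_seconds, interactions, weights=(0.5, 0.3, 0.2)):
    """Blend three engagement metrics into one score between 0 and 1."""
    w_ctr, w_view, w_inter = weights
    view_part = min(avg_view_seconds / 60.0, 1.0)   # cap at one minute of viewing
    inter_part = min(interactions / 1000.0, 1.0)    # cap at 1,000 interactions
    return w_ctr * ctr + w_view * view_part + w_inter * inter_part


# Hypothetical metrics for two pieces of content.
items = {
    "accurate_explainer": dict(ctr=0.02, avg_view_seconds=45, interactions=100),
    "misleading_clip": dict(ctr=0.10, avg_view_seconds=50, interactions=900),
}

scores = {name: engagement_score(**metrics) for name, metrics in items.items()}
top = max(scores, key=scores.get)
print(scores, top)
```

Because the score sees only performance, the misleading clip outranks the accurate explainer in this example.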

Why Do Users Feel Manipulated or Misled by Algorithmic Decisions?

Users feel manipulated when their expectations for fairness, relevance, or control are violated. Many Americans assume feeds reflect what’s most important or authentic. When unexpected content dominates or trusted voices disappear, they often feel tricked by an invisible system.

Psychological responses play a major role in perceived manipulation. As someone who has spoken to confused users during community digital literacy sessions, I’ve found many people describe a sense of being “watched” or “steered.” The problem isn’t just technical; it’s emotional. People want agency in digital spaces but instead feel nudged without consent.

Misleading terminology from platforms reinforces this confusion. Words like “Explore,” “For You,” or “Trending” suggest neutrality, but these spaces are highly curated by algorithmic preferences. The gap between label and function leads to frustration and mistrust.

Cognitive Dissonance and Trust Breakdown

Cognitive dissonance arises when a user’s belief in fairness clashes with feed outcomes. If someone likes diverse content but sees only one-sided posts, confusion escalates into distrust. Trust erosion impacts how users engage, share, and value the platform overall.

Invisible Personalization Triggers

Invisible triggers include pause time, comment history, saved posts, and even hesitation before scrolling past. Most users don’t realize that these signals drive what shows up. Personalization feels invisible because feedback loops are not shown, causing users to question platform intentions.

What Is the Impact of Algorithm Confusion on Behavior and Society?

Confusion around algorithms influences both individual behavior and broader societal outcomes. When users misunderstand how content is delivered, they often misattribute intent, react emotionally, or disengage entirely from platforms. The result is polarized behavior, decreased trust, and higher rates of digital fatigue.

In my analysis of platform-driven behavior, I’ve seen how misinformation spreads faster when users assume content is popular or endorsed. Algorithmic amplification, misunderstood as organic consensus, causes people to believe distorted narratives. Social consequences emerge when platforms reinforce narrow views, even unintentionally.

At a social level, algorithm confusion contributes to division and echo chambers. Communities receive different versions of reality depending on their engagement patterns, making constructive dialogue harder. The platform design unintentionally segments the public into self-reinforcing content bubbles.

Misinformation Amplification

Confusion about source credibility leads users to believe that repeated content must be true. Algorithms prioritize engagement over accuracy, so misleading content with high shares or likes surfaces more than reliable but less engaging posts.

Emotional Polarization

Emotionally charged content, especially outrage-driven posts, tends to perform well in engagement metrics. Algorithms surface such content more frequently, influencing user sentiment. This increases hostility and tribalism and reduces space for moderate or thoughtful discussion.

How Can Users Better Understand and Manage Their Algorithm Exposure?

Improving algorithm literacy involves recognizing how platform behavior shapes feed content and taking deliberate steps to regain control. By tracking personal interactions and using tools like content controls, users can begin to disrupt unwanted personalization loops.

As a personal strategy, I recommend experimenting with account behavior to test its influence. Actively search for diverse content, mute repetitive categories, and use “not interested” buttons to reshape recommendations. These steps don’t eliminate algorithmic influence, but they help redirect it.

Transparency tools are increasingly offered by some platforms, though often buried in settings. Learning to navigate these controls empowers users to adjust preferences, limit data tracking, and influence algorithmic output. Self-awareness becomes the first step to digital empowerment.

Manual Customization Features

Features like “Follow First,” “Favorites,” or “See Less Often” give users manual control over parts of their feed. When used consistently, these tools push the algorithm to adapt to newer signals and reduce irrelevant or polarizing content.

Behavioral Feedback Loops

Understanding feedback loops helps users manage what they consume. Engaging repeatedly with similar content reinforces patterns, so breaking a loop requires conscious engagement with different voices, topics, and formats, even if they are not immediately appealing.
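A toy simulation makes the loop visible. It assumes a deliberately simple model: each round the feed shows the topic with the highest interest weight, and whatever the user engages with gains weight. The function, the starting weights, and the update rule are hypothetical.

```python
def simulate(interest, rounds, choose):
    """Run the loop: the feed shows the top topic, the user engages, that topic's weight grows."""
    interest = dict(interest)  # copy so the starting weights are left unchanged
    for _ in range(rounds):
        shown = max(interest, key=interest.get)
        engaged = choose(shown, interest)
        interest[engaged] += 1.0
    return interest


start = {"politics": 2.0, "science": 1.0, "cooking": 1.0}

# Passive user: engages with whatever the feed shows.
passive = simulate(start, 5, choose=lambda shown, interest: shown)

# Deliberate user: ignores the feed and seeks out the least represented topic.
deliberate = simulate(start, 5, choose=lambda shown, interest: min(interest, key=interest.get))

print(passive)
print(deliberate)
```

In this model the passive user ends up with politics dominating the profile, while the deliberate user finishes with all three topics weighted equally.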

What Responsibilities Do Platforms Have in Reducing Confusion?

Platforms bear ethical responsibility to reduce confusion by improving transparency, providing user education, and making algorithmic signals more visible. When platforms prioritize ease of use over understanding, they leave users vulnerable to misinterpretation and manipulation.

Having followed platform updates for years, I’ve seen moments where clear communication drastically improved user trust, such as explicit alerts during algorithm tests. When platforms share the “why” behind changes, users respond more thoughtfully and less reactively.

Accountability doesn’t require exposing source code; it requires offering contextual signals. Showing why content appears (“based on your watch history”) or offering audit trails could build transparency. Without these cues, platforms risk losing the loyalty of users and the trust of regulators.

Transparent Explanation Interfaces

Interface-level indicators, such as “Why am I seeing this?” or “Based on your behavior,” help close the gap between user perception and reality. These interfaces serve as digital mirrors, reminding users that their actions shape their feed.
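One possible shape for such an indicator is sketched below: take the signal that contributed most to a recommendation and translate it into plain language. The signal names, contribution values, and label wording are hypothetical; this is not how any specific platform implements its explanations.

```python
# Hypothetical mapping from internal signal names to user-facing explanations.
REASON_TEXT = {
    "watch_history": "Based on your watch history",
    "follows": "Because you follow this account",
    "similar_users": "Popular with people who have similar activity",
}


def explain(signal_contributions):
    """Return a plain-language reason for the signal that contributed the most."""
    top_signal = max(signal_contributions, key=signal_contributions.get)
    return REASON_TEXT.get(top_signal, "Based on your activity")


reason = explain({"watch_history": 0.6, "follows": 0.1, "similar_users": 0.3})
print(reason)
```

Even a one-line reason like this connects a recommendation to the behavior that produced it.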

Algorithmic Auditing and Ethics

Regular auditing helps platforms check algorithmic fairness and balance and correct unintended bias. Ethics teams within platforms can test an algorithm’s impact on different demographics to ensure equitable content exposure and avoid reinforcing harmful stereotypes or narratives.

What Is the Future of Algorithmic Literacy in the United States?

The future of algorithmic literacy depends on a combined effort from platforms, educators, and policy makers. As algorithms become more central to online life, the demand for public understanding will increase. Algorithm literacy must become as common as media literacy once was.

In schools, digital education curricula can integrate algorithm basics, showing students how platforms rank content and influence thought. As someone who’s worked with students and educators on this, I’ve seen the spark of awareness once learners realize they’re not passive consumers but active participants in shaping their digital world.

Government and advocacy groups are starting to push for algorithm transparency as a policy issue. The next decade will likely bring regulatory frameworks requiring platforms to explain and justify how they personalize content. For users, the key is staying curious, reflective, and aware of digital habits.

Education and Curriculum Development

Incorporating algorithm awareness into public education allows younger generations to grow up questioning content visibility. This shift empowers them to make informed digital decisions and identify when platforms may be shaping their worldview.

Legislative Oversight and Consumer Rights

Policies that mandate algorithm disclosures, user access to personalization logs, or independent audits could transform platform accountability. Giving users the right to know how decisions are made moves society toward informed digital citizenship.

Key Drivers of Platform Algorithm Confusion

| Cause | Description |
| --- | --- |
| Lack of transparency | Users are not told how content is chosen |
| Misleading labels | Phrases like “Trending” or “For You” mask curated feeds |
| Behavior-based personalization | Invisible signals like pause time shape what users see |
| Frequent algorithm changes | Platforms change systems without warning |
| Engagement over relevance | Content is ranked by interaction, not importance |

Conclusion

Algorithm confusion stems from a gap between expectation and operation. Users assume they are in control, but platforms prioritize prediction and engagement. The misunderstanding leads to mistrust, polarization, and manipulation. Exploring algorithmic design, user behavior, and platform responsibility brings that gap into focus. The future calls for transparency, literacy, and shared accountability. When users learn how their feed is built, they reclaim power in digital spaces and can navigate content more critically, confidently, and consciously.


FAQs

Your behavior signals, like what you watch or pause on, are tracked and used to predict your interests. The system reinforces similar content to maximize your engagement, creating a feedback loop.

Yes, but not entirely. Using tools like “not interested,” muting topics, or searching for diverse content helps reset patterns. Active engagement is necessary to shift what the algorithm shows you.

Algorithms are not inherently biased, but they can reflect human biases based on data input. Without oversight, these systems may amplify stereotypes, misinformation, or unbalanced narratives.

Many platforms avoid detailed explanations to maintain competitive advantage or simplify user experience. Full transparency could reduce user time spent or highlight vulnerabilities in the system.

Indirectly, yes. Algorithms prioritize content that performs well, and political content often has high engagement. This can create echo chambers that reinforce specific views and reduce exposure to differing perspectives.